libreswan is now older than openswan was at the time of the forced rename to libreswan. Please stop using openswan. It is not safe to use!

libreswan is now older than openswan was at the time of the forced rename to libreswan. Please stop using openswan. It is not safe to use!

UPDATE: The police told us that anyone else who has been frauded by Ethan and did not get their money back, should call them at 416-888-2222 and ask to speak to the communications department.

I submitted a CAFC Fraud Reporting System, file number 2020-142083

Nov 27 update: Ethan is still actively committing fraud on kijiji. Additional listing and chat conversation added at the bottom of this post.

A few weeks ago a friend asked Naomi and me for some advise on getting a second hand macbook laptop. After looking around for a while, a nice deal came up a few days ago that was slightly cheaper than most laptops. Sold by what looks like some small computer shop called “9067388 Canada LTD”. And thus begins the saga of Ethan Sin, A.KA. Ethan Singam, A.K.A. GEETHAN VANNIYASINGAM.

(Note this posting has been updated to redact the friend’s name)

The Kijiji ad that started this all was finally taken down on Nov 19 during the day.

https://www.kijiji.ca/v-laptops/city-of-toronto/2016-macbook-air/1535479433

I told my friend that we would drive up to her and be there with her to pick it up at the Tim Hortons, just to ensure the Mac was working and we could get her Apple ID on it – to ensure it was not some stolen device. My friend called us an hour later saying the seller asked her to etransfer $40 to show that she was really going to show up, since he had other people offering to buy it as well. She asked if that was safe. Note that etransfers here in Canada are linked to your bank account. So unless you are going to take money and leave Canada forever, you are not going to mess around with that. She also by now had his phone number (289-600-9580), so if this was fraud, he would also be burning his phone number. A new SIM in canada is still like $15 or so. Plus, we had an email address to etransfer the money to, ethansingam@gmail.com

It seemed this was safe. And a real fraud would ask for more than $40 right? Also, this kijiji account was a few years old. Petty criminals aren’t in it for the long game. They don’t setup accounts to commit fraud years later. So my friend etransfered the $40.

A little while later, he informed my friend that he was going to be a little late. Something with picking up his mother. Meanwhile, we all drove up (in separate cars, there is a pandemic going on) to the Timmies up north. The guy was late. Very late. An hour and a few excuses later, still nothing. We guessed it was a fraud after all. Time to do some research.

What does the Canadian Chamber of Commerce know?

The company associated with the kijiji ad was founded in October 2014, and dissolved for “non-compliance” in August 2019. It was located at 19-13085 Yonge St suite 211 in Richmond Hill. Their latest filing shows the only directory is Yuri Nogaev residing at 2220 Lake Shore blvd Suite 1601 in Toronto. Yuri was also a director for another company “National Water Comfort LTD”:

https://www.companiesofcanada.com/company/995158-0/national-water-comfort-ltd

This company, located at the same address, was also dissolved by the government for non-compliance. This company has one other directory along with Yuri, GEETHAN VANNIYASINGAM.

That seems to match up nicely with the email address the etransfer was sent to, ethansingam@gmail.com. Some further investigation gives us Ethan’s current adventure, Ethan Advertising Incorporated, which still has a website at www.ethanadvertising.com. Note this company is claiming to sell branded tote bags and the above screenshot on the laptop showed someone working on “bag” things.

The website does not list an address for the company, only a contact phone number of 289-779-5556, which differs from the one Ethan gave my friend (289-600-9580). The domain registration is not very useful, as GoDaddy does not reveal much and places itself as proxy in between. We see a domain creation date of Jan 20, 2020.

Let’s pull up some corporate information. There is also Athena Management Services Inc:

https://opencorporates.com/companies/ca/8167168

But other than some old address information, no interesting new information. But then we found Ethan Advertising:

https://opencorporates.com/companies/ca/11843290

It links to the government registration:

https://www.ic.gc.ca/app/scr/cc/CorporationsCanada/fdrlCrpDtls.html?corpId=11843290

Which in turns gives us a new address: 946 Wildwood Dr, Newmarket ON L3Y 2B5, Canada. And this postal code matches the kijiji ad location! He must still be living there.

Although at this point it seems unlikely he actually owns the laptop he claims to want to sell. I mean, if you do this fraud, you would just grab a random laptop image from the internet and put up a fake ad….. Or would you? A reverse image search on Yandex didn’t give us a matching photo, and we don’t have much other data to investigate. Except, there is that laptop screenshot in the ad. If you look at the background you see it contains a folder what appears to be someone’s resume:

[updated to redact the name of the person on the resume. Let’s call this person “Friend”]

Let’s see if we can find [Friend]. It seems to be someone located in Toronto according to Facebook: [facebook url redacted]

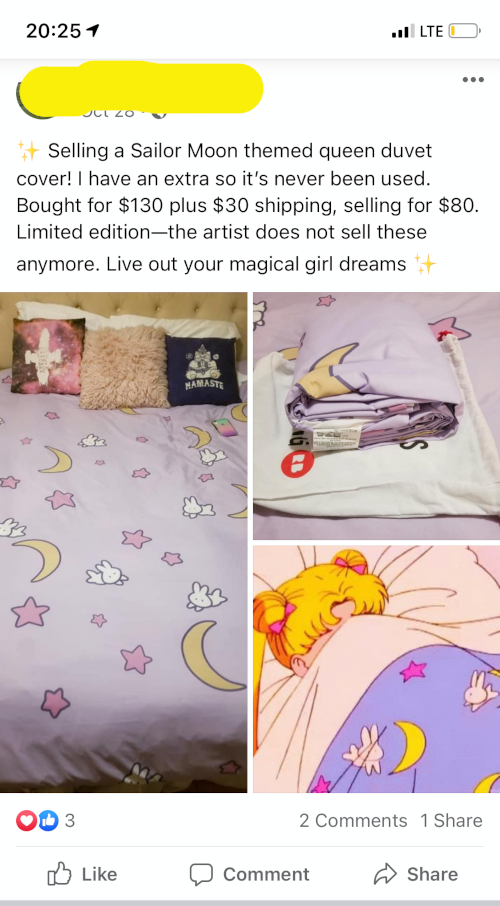

The last thing this person did publicly was post an ad to sell some – familiar looking – Sailor Moon bed sheets. Let’s compare the photos:

No way! The same sheets and pillows are visible in the laptop photos of the kijiji ad. So the guy committing a kijiji fraud did not pick a random internet image, but somehow ended up using a local image? Perhaps it was [Friend] who sold the laptop and the sheets?

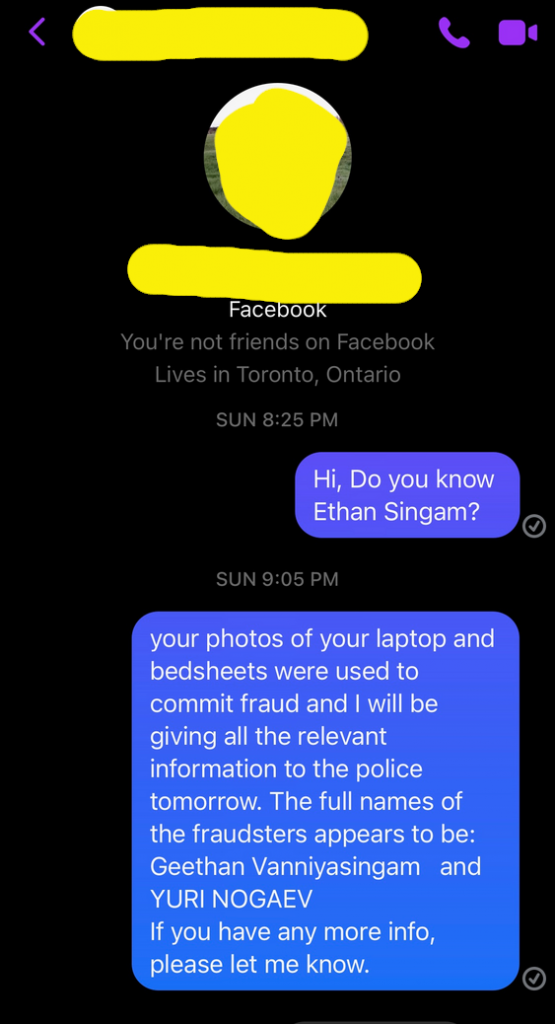

Naomi posted a comment to the facebook ad for the sheets with “Hi, do you know Ethan Singam“.

Since we had no indication she is related to Ethan, we decide to warn her and see if we could get a confirmation she sold that laptop ages ago by sending her a message.

We did not get a response. But shortly after we sent the message, the bedsheets that had been listed for sale for a month suddenly vanished. If this had happened to me, I would at least have replied with something and not just silently removed my stale for-sale post. Even if just to ensure it doesn’t look like I’m cleaning up my posting linking me to this guy that is committing fraud.

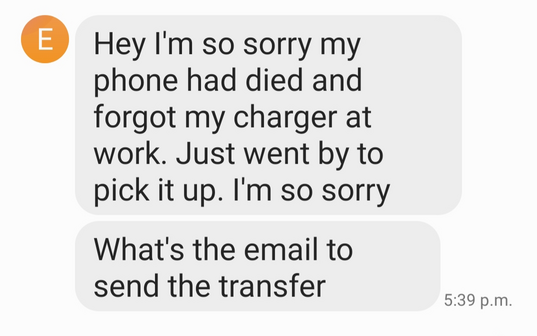

My friend obviously send a text message telling him she wanted her money back, and not expecting any further messages. The next day she received:

Seriously, the “I forgot my charger” excuse does not work anymore in a world where every phone is charged by Lightning or USB connector…

My friend did tell him her email address, even though he knows it as it is visible in the email interact transfer. So asking for the email address seems just a stalling tactic. Note that there is no word about the laptop sale. Perhaps he sold the laptop for more when someone offered more, or he decided to no longer sell it. But he doesn’t say anything like that. He is now just focused on returning the $40 without explanation of why his died phone prevented him from driving to the Tim Hortons. It’s as if there never was a laptop …..

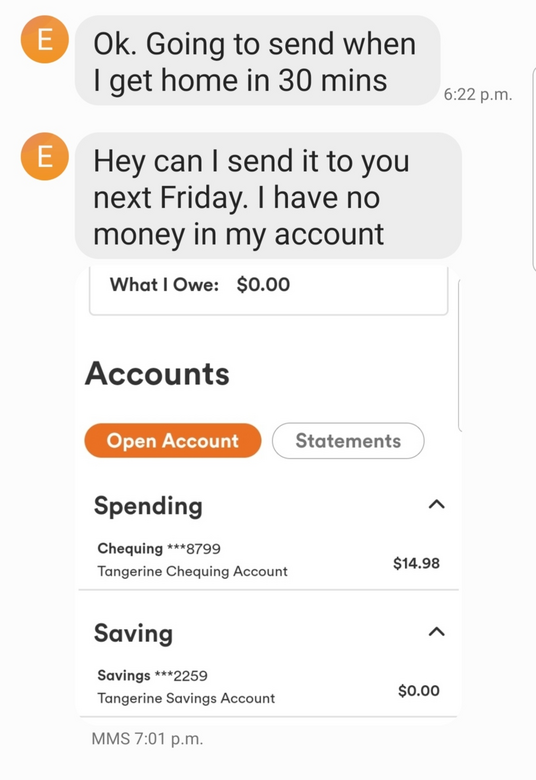

He replies:

He must have already spent most of his $40 that he got only a day ago. Not a big saver. Sending money with a Tangerine account is free, so we asked him to send the $14 now and the rest later. We kind of wanted to see if we could get a confirmation of his identity via the financial transaction in case he really got concerned about his unwise scheme unfolding as it did. But we didn’t have high hopes. And sure enough:

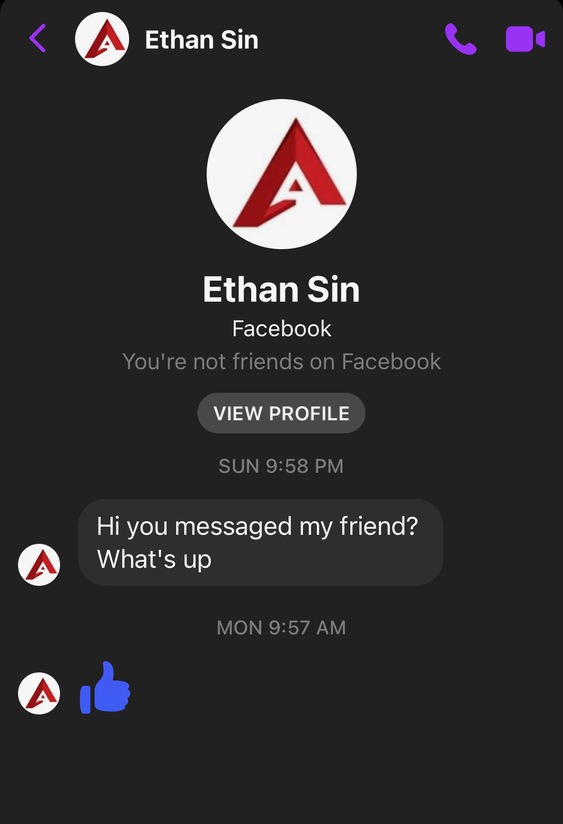

We are fairly confident the $40 won’t get returned next Friday or the Friday after that. This was surely where the story would end. Except it didn’t. A day later Naomi noticed that she had received this message on Facebook:

Look, this Ethan is using the same logo as Ethan Advertising. This account Ethan Sin was already active in 2018 and not just created for this response. Perhaps what happened was that [Friend] first saw the comment left by Naomi on the facebook ad for the sheets and reached out to Ethan, who replied right away to Naomi. Then [Friend] saw the message about fraud and quickly deleted the posting – and presumably told Ethan. But it was too late. He had already replied. And by contacting Naomi back, he confirmed Ethan, [Friend] and the laptop are all connected to each other.

I’m surprised the kijiji ad is still up. It has now been reported by my friend, by Naomi and by me as a fraud. Is he really making many times $40 on this fraud? Will he really pay the $40 back next Friday? Stay tuned! (it was taken down a day later)

UPDATE: Ethan did do this multiple times, Here is a link to a “Kijiji warning ad” from someone else who got scammed warning people about Ethan. If you got frauded, please feel free to contact me, paul@nohats.ca. !

UPDATE2: Combining the Facebook “read message” indicator timestamp with my server logs, we now know Ethan has read this blog post using his Rogers cell phone:

2607:fea8:560:3e00:7c83:a36c:388c:f195 – – [19/Nov/2020:11:43:38 -0500]

That should give the police another piece of information linking the person to the facebook account

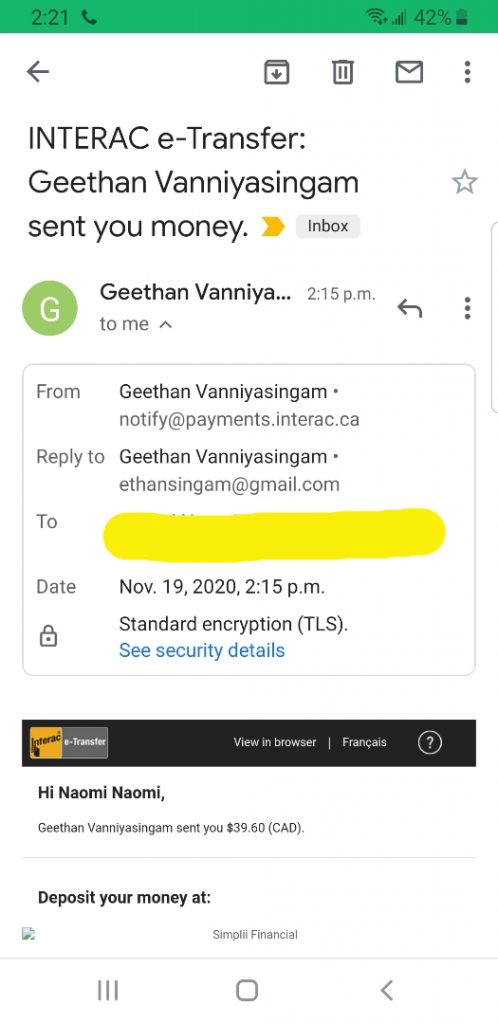

UPDATE3: Ethan was contacted by [Friend] whom I gave a link to this posting and this prompted him to contact me to ask to take the post down or at leats the friend’s details. He ended up paying back the money he stole (well, $39.60 of it) so I redacted his friend’s details. He or Kijiji took down the fraud posting. Oddly enough, he included his conversation with [Friend] as a screenshot, showing [Friend] saying “I thought you already sold your laptop”.

So I guess he really put it up for sale, and then smelled an opportunity to get some more money. I don’t know how much he frauded people, but if he only did this twice, he could have gotten the same amount of money legally by properly pricing his laptop’s market value (which was defintely $80 lower than the commonly used price by other sellers.

Poorly executed crime doesn’t pay

So here it is, the repayment in all its glory, including the mixup of name and being $0.40 short:

UPDATE4: We now have heard of a third person that was frauded for $100 by Ethan who shared some screenshots with us:

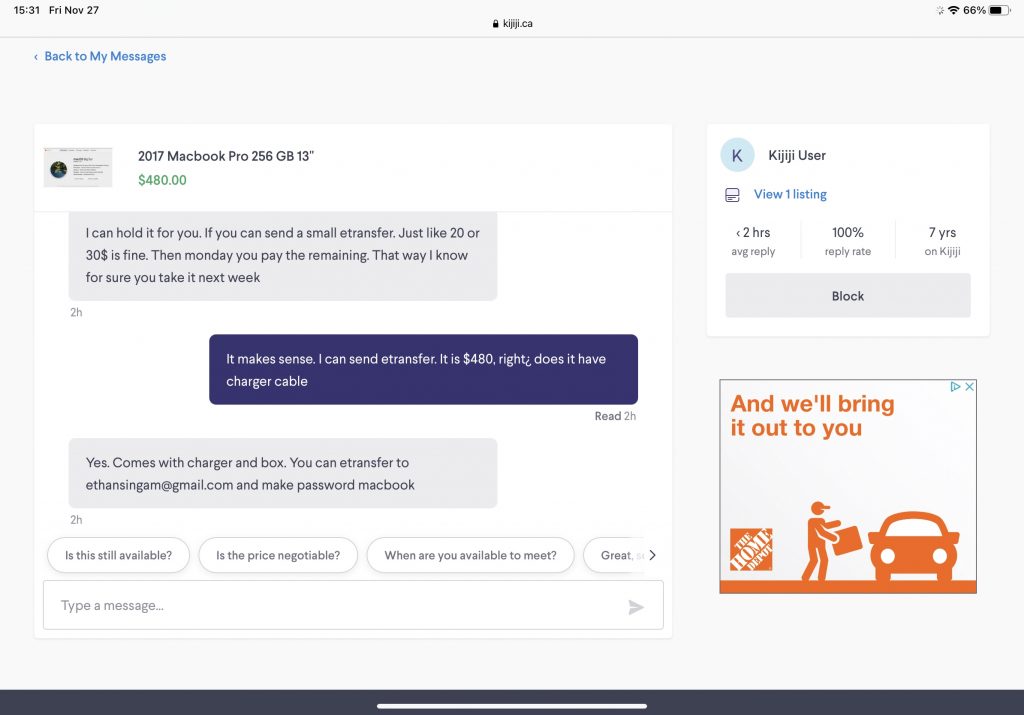

November 27: Ethan is still doing his fraud scheme. This time as a different (old) kijiji account with no identifying name. And since he already pulled the listing, it’s hard to identify this account. The original kijiji posting was https://www.kijiji.ca/v-view-details.html?adId=1538151094

His typical “give me some money to reserve it” scheme on this entry submitted to me by another user who was smart and googled for the etransfer email address first (and found this blog post):

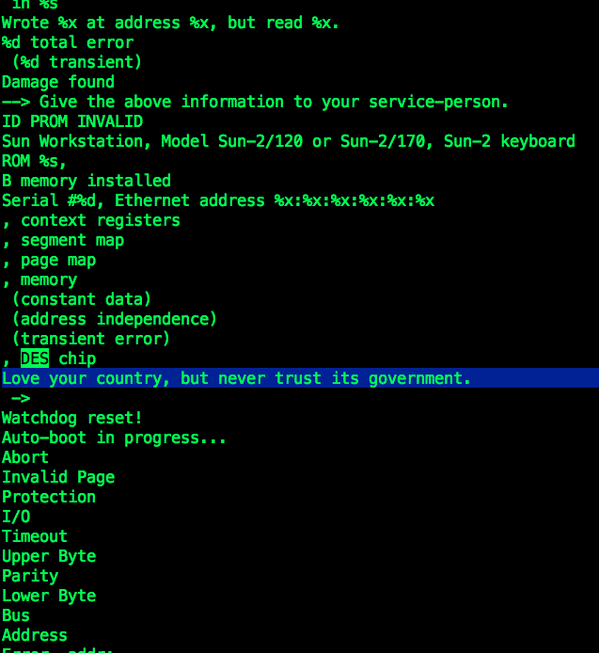

(UPDATE: Sevan Janiyan pointed out the Sun 2 was not a SPARC system)

Yesterday, Alec Muffet posted a few tweets regarding the Sun SPARC 2 bootloader’s DES routines.

Alec figured that message was never supposed to be seen and suggested it was a kind of silent protest of someone in Sun against the US Government. I replied, saying I was pretty sure such a message anywhere in the Sun bootprom code must have originated by John Gilmore. So I asked John, and he did not disappoint. This is what I wrote me back:

> That must have been you? :)

Yes. Vinod Khosla, first President of Sun, came to me at one point

and said to put something hidden, triggered in an unexpected way, into

the ROM Monitor, so that if somebody cloned the Sun Workstation

(violating our software’s copyright), we could do that unexpected thing

to the competitor’s demo workstation at a trade show and thereby prove

that they had cloned it.The ROM Monitor command that printed “Love your country, but never

trust its government.” was “k2” followed by control-B and RETURN. (To

get to the ROM Monitor from the running Unix display, you had to hold

down the L1 key and hit “A” then release both; the monitor had special

code looking for that key sequence.) I had found that saying years

before on a hand-painted sign tacked up on a pole or tree in central

Pennsylvania, wrote it into one of my notebooks at the time, and

plucked it out as the hidden thing after Vinod asked. In the source

code it was obscured as a set of hex numbers, but in the binary it was

visible. (I didn’t bother to XOR it with something to make it more

hidden.)The circumstance Vinod was concerned about never did come about;

nobody stole the Sun boot roms. Early 680x0s didn’t come with a

standard MMU, so everybody who wanted virtual memory had to invent

their own, and our roms were very tied to our custom static-ram based

zero-wait-state MMU, which was also patented. A few companies

licensed the board design from us, like Imagen for a laser printer

controller, but they had a license to use the ROMs.The DES chip slot was intended to speed up DES for high security

networking applications, and we did get it working. I think Bill Joy

was the one who made it a part of the architecture, all the way

through the Sun-3, thinking that we’d use it as part of securing the

network file system. But the chip was expensive and

export-controlled, and our software never really used it (NFS ran in

plaintext and used the sender’s IP address for authentication!). So

it didn’t get stuffed on the production boards, and eventually they

stopped stuffing the support chips too. Tom Lyon wrote a nice device

driver for it, and a “des” user command that would use the chip for

file encryption if it existed (or fall back to software).

In one 68010 based model we also put in a slot for an Intel floating

point chip — I think the one that came with the 80286. We got it

working (I did the initial debugging) and fed it commands and

arguments via manual peek/poke in software. Doing that was somewhat

faster than the software floating point that we were otherwise using,

despite the overhead. But as I recall, Intel wouldn’t sell us the

chips in volume, because they didn’t want to make the 68000 more

competitive against their own x86 chips. So we had to wait til the

68020/68881 came out (Sun-3 era) before we had fast floating point.It’s nice that Matt Fredette made a Sun-2 emulator. I may have

images of old SunOS release tapes that might work in it. And I have a

Sun “FE Handbook” for the field engineers, that has a lot of the

details about what chips go where, what the jumpers on each board

mean, what the memory map of each board looks like, and etc.You can forward this info on to whoever…

John

I wrote an article over at the Red Hat Security blog about the SLOTH attack and IKE/IPsec.

So the weakdh.org article got some renewed press again, and it keeps coming up with this rather misleading paragraph:

We further estimate that an academic team can break a 768-bit prime and that a nation-state can break a 1024-bit prime. Breaking the single, most common 1024-bit prime used by web servers would allow passive eavesdropping on connections to 18% of the Top 1 Million HTTPS domains. A second prime would allow passive decryption of connections to 66% of VPN servers and 26% of SSH servers. A close reading of published NSA leaks shows that the agency’s attacks on VPNs are consistent with having achieved such a break.

That’s a rather bold statement. How do the authors know the MODP groups of 66% of VPN servers? This is what they write in their research paper:

We measured how IPsec VPNs use Diffie-Hellman in practice by scanning a 1% random sample of the public IPv4 address space for IKEv1 and IKEv2 (the protocols used to initiate an IPsec VPN connection) in May 2015. We used the ZMap UDP probe module to measure support for Oakley Groups 1 and 2 (two popular 768- and 1024-bit, built-in groups) and which group servers prefer. To test support for individual groups, we offered only the single group in question. To detect default behavior, we offered servers a variety of DH groups, with the lowest priority groups being Oakley Groups 1 and 2.

The first problem is that the first packet exchange performs the DiffieHellman and no IDs or credentials are sent. This means that the server in question does not yet know which configuration this connection maps to, unless it is limited by IP. If it is limited by IP, then their probe likely did not even solicit an answer because they came from the wrong IP – Cisco VPN servers tend to not give error messages and will just drop the packet. If the configuration is not limited by IP, because the connection supports roaming users, then the VPN server cannot yet reject the connection based on a weak MODP group. Imagine the following configuration (in SWAN ipsec.conf syntax):

conn regularusers

left=my.ip.address

right=%any

rightid=%fromcert

ike=aes256-sha1-modp1536

conn oldcisco

left=my.ip.address

right=%any

rightid="CN=knownweakdevice.com, O=OldVendor, C=CA"

ike=aes256-sha1-modp1024

When the weakdh people’s scanner showed up, it allowed the initial request/response packet to be 1024. But once it got further into the negotiation, if would authenticate and identify the remote peer, and refuse to establish the IKE connection with the modp1024 group for everyone except the one known buggy device. But since the scanner had no credentials, it never got that far. So this is a false positive. This is how freeswan and openswan worked, and how libreswan works. When it receives more information, it tries to switch connection to the best matching connection. Note that with only the above two configurations enabled, libreswan would immediately reject an incoming IKE exchange that tries to use modp768 or modp2048, since no loaded connection specifies that modp group. Note the default when not specifying an IKE line is modp2048 and modp1536 for IKEv2, and modp1536 and modp1024 for IKEv1. With a preference for the higher group.

This method of connection switching requires the IKE daemon to know or go through all the connections to look at the modp group. If I understood the strongswan implementation correctly, it does not even bother to reject the incoming request based on modp group. It will just answer whatever the modp group is on the first packet. Later on in the exchange, once it has committed to a specific configuration, it will check for the modp group and reject the connection. So that is false positive number two for the scanner.

The article continues:

Of the 80K hosts that responded with a valid IKE packet, 44.2% were willing to accept an offered proposal from at least one scan. The majority of the remaining hosts responded with a NO-PROPOSAL-CHOSEN message regardless of our proposal. Many of these may be site-to-site VPNs that reject our source address. We consider these hosts “unprofiled” and omit them from the results here.

Well, that means the authors just excluded all freeswan/openswan/libreswan servers that are configured to not allow modp1024 because that is exactly what they would return. Libreswan per default does not allow modp1024 in IKEv2, so this is a rather big false negative!

We found that 31.8% of IKEv1 and 19.7% of IKEv2 servers support Oakley Group 1 (768-bit)

In our sample of IKEv1 servers, 2.6% of profiled servers preferred the 768-bit Oakley Group 1

Now regardless of the strong connections the authors missed, they did find a large number of weak ones. There is no excuse whatsoever for an IKEv2 server to allow modp768 or modp1024. It’s even questionable to allow modp1536 because every IKEv2 implementation supports modp2048. The 31.8% of modp768 is also inexcusable. The freeswan/openswan implementations never supported modp768 unless you specifically recompiled it (and the distro versions never had it compiled in). libreswan removed the compile time option altogether. The modp1536 group made it into freeswan – and preferred over modp1024 – before the modp1536 group was actually standardized in an RFC! So unless you specifically configured any of the *swan IKE implementations to use these weak groups, and your remote clients didn’t start out with a weak group, you would be using modp1536. Even back in 1997. This has not been an IKE problem in opensource IKE software ever. But, there is a catch.

The insecure Cisco administrator

86.1% and 91.0% respectively supported Oakley Group 2 (1024-bit)

This statistic matches my 15 year experience with IKE very well. It is very unfortunate that administrators fear reconfiguring a Cisco’s IPsec configuration. As such, when you need to interop with a Cisco, the other party usually has a SOP document that tells them how to configure the Cisco for use with 3DES-SHA1 modp1024. You are lucky if you are not forced to use md5. This is not a technical problem – Cisco VPN Concentrators can do modp2048. It is the humans that are afraid to touch something they are not familiar with that works.

MODP groups in the near future

The ipsecme working group at the IETF is actually working right now to update the set of mandatory to implement algorithms. This also includes promoting new algorithms to SHOULD or MUST and demoting others to MAY or MUST NOT. But it will only do so for the IKEv2 protocol. Most vendors have locked their IKEv1 stacks and will not make any changes to it anymore. So IKEv1 will always stay using modp1024 and modp1536. So if you can, use IKEv2.

Conclusion

The 66% of VPN number is surely very wrong. Measuring that number is per definition impossible, due to the common implementations of IKE daemons that hide the acceptance and rejection of the modp groups from un-authenticated IKE clients. However, I do agree that there are too many old IKE servers deployed that need to have their configurations upgraded to allow stronger modp groups and stronger encryption protocols.

If you want to go beyond modp1536, you should really upgrade to IKEv2. You will also need to move to IKEv2 if you want to move from AES-SHA1 to AES_GCM (or soon chacha20poly1305). Any IKE implementation that does not support IKEv2 is not actively maintained and should not be used.

See also my earlier article weak DH and LogJam attack impact on IKE

UPDATE: A more detailed explanation of the NO_PROPOSAL_CHOSEN case

The way IKE is specified and the way it works in practice is slightly different. The theory of operation is that a client starts with a list of proposals, that can include multiple DH modp groups. But that initial packet already contains the KE payload for one of the DH modp groups. So the client might send a proposal set containing 1024 + 1536 + 2048, but it only sends one KE payload. That KE payload basically denotes the client’s default. In this example, that would be either 1024 or 1536 or 2048. If the server receives this KE + proposal, it needs to make a decision. If the KE modp group is acceptable, use that. If not, then look if the proposal contains any other modp groups it is willing to use. If it does, it should return INVALID_KE with a payload of the acceptable modp group to use. The client receiving this can then select the other modp group it is willing to use and start the IKE exchange from scratch with a new request. (It’s a little more complicated because this reply is unauthenticated, so the client might wait for a bit to see if a “real server” accepts its modp). In practise, this often does not work. For example iphone ios8/ios9 do not process INVALID_KE payloads at all, as they assume a single correct modp group is configured on both sides using their enrollment program. So in practise, the server will accept the client’s DH if it is one of its allowed groups. This can mean that even though client and server both can do a more secure DH group, they end up using the most commonly used shared group. This is usually 1536 or 1024 for IKEv1 and 2048 for IKEv2.

An additional issue with IKEv1 is that the first packet also contains the OAKLEY_AUTHENTICATION_METHOD. If this is mismatched (eg PSK vs RSA) the IKE server will also return NO_PROPOSAL_CHOSEN.

And both both IKEv1 and IKEv2, the initial packet contains encryption/integrity algorithms too. If these mismatch (eg AES vs 3DES or SHA1 vs MD5) then the IKE server will also return NO_PROPOSAL_CHOSEN.

You can test the NO_PROPOSAL_CHOSEN return directed at vpn.nohats.ca. It is configured to accept connections from anyone, but requires modp1536 for either IKEv1 or IKEv2, using X.509/RSA authentication. You can send it PSK requests for modp1024 and get NO_PROPOSAL_CHOSEN.

A large dutch ISP ran into issues with their Registrar Network Solutions over the weekend. (why anyone would be using NetworkSolutions like it is 1993 is beyond me)

A misunderstanding over a payment caused hundreds of domains to be entered into PENDING-DELETE state. One such example is puiterwijk.org. Some of these domains have been in this state now for days.

What made things worse is that said NetworkSolutions took over running DNS for these domains, including an MX record that points to an actual mail server!

So if you were lucky, your email just bounced. If unlucky, someone else got your emails. The TLS certificate on that mail server doesn’t even match their hostname, so anyone on path can also just MITM it and a traceroute shows 26+ hops all over the place.

However, they did not modify the DS records after taking over the NS records and MX/A records. So those domains that used DNSSEC, including the above mentioned puiterwijk.org, are not resolving at all because the validators are rejecting the NetworkSolutions stolen DNS zones as bogus. So, my emails to this person were not delivered to the rogue MX servers because both he and I deployed DNSSEC. Hoorah, I guess….

Now, taking over MX and causing email failures like this is pretty evil. I would hope this violates some ICANN or PIR agreement but as said Registrar has been a sad registrar since uhm about 1993, I guess nothing is going to change. Let’s hope the Dutch ISP has learned from this and will move to another Registrar soon after this mess gets resolved.

TL;DR The LogJam downgrade attack does not apply to MODP groups in the IKE protocol, only to TLS, so IKE or IPsec is not impacted.

If you are using libreswan you are not vulnerable to weak MODP groups and using MODP2048 per default unless specifically configured for a lower MODP group.

If you are using openswan with IKEv2 you are using MODP2048, but if you are using IKEv1 you are using MODP1536 which is still much stronger than MODP768 or MODP1024.Libreswan as a client to a weak server will allow MODP1024 in IKEv1 as the least secure option, and MODP1536 in IKEv2 as the least secure option.

Openswan does not properly implement INVALID_KE, so it cannot connect to another DH group than the one it started out as, so it runs the risk of getting locked out if the server side bumps their minimum MODP group to 2048. openswan defaults to MODP1536 in IKEv1 and MODP2048 in IKEv2

(minor update: Hugh Redelmeier corrected me that freeswan never supported modp768 – the smallest modp was 1024)

A bunch of smart crypto research released a paper describing the LogJam attack. It is a cryptographic attack on weak DiffieHellman groups. It talks about DH in various protocols. It is an interesting read. When you break the DH layer, you obtain the secret session keys needed to decrypt the various protocol (TLS, IPsec, SSH, etc) sessions. The conclusion from the paper is that any DH group smaller than 1024 should not be used as even non-nationstate attackers can obtain the resources needed to break these. They further conclude that they believe it very likely that the standarized 1024 bit DH MODP group has been broken as well by the NSA. It involves large scale computing clusters and sieving and doing a lot of heating up the planet. They can do this because in crypto standards, everyone uses the same MODP prime values for the DH exchange.

First, a small clarification. When the paper talks about IPsec, they mostly mean the IKE keying protocol, not the ESP packet encryption protocol. IKE is responsible for the secure keying operation that generates symmetric session keys used for the actual packet encryption in the ESP protocol. IKE is the userland daemon, ESP is the kernel level encryption/decryption.

The DH values for IKE are listed in various RFC’s, and can be found in the IANA registry for the older (but more used) IKEv1 GROUP values and for the newer but less used IKEv2 GROUP values. For the published attack, the only type of groups relevant are the MODP groups. The ECP groups do not use exponentiation and thus are not vulnerable to factoring primes.

IKEv1 MODP groups

In IKEv1, the most common modp groups are modp1024 (DH2) and modp1536 (DH5). Some people still want to use modp768 (DH1) but it has strongly decreased over time. Most IKEv1 stacks also support modp2048 (DH14) up to modp8192 (DH18) although notably Cisco administrators in general seem to have stuck with modp1024 and modp1536, just as they have stuck with 3DES and MD5/SHA1. This is mostly because the technical knowledge to configure Cisco’s for VPN is so difficult, that once a Standard Operating Procedure(SOP) is in place, no one wants to, or is allowed to, modify the configuration used. (It’s also the tail end of Cisco asking for extra money to support AES many years ago)

FreeS/WAN never supported modp768 – John Gilmore had good ideas about scaling and NSA computing powers, he was one of the people behind Deep Crack, the EFF 1DES cracker. Openswan which was forked from FreeS/WAN in 2003 had support for modp768 (and 1DES) but did not compile it in per default and neither did any of the Linux distributions that shipped it. But people needed it frequently enough to interoperate that the code was added, but disabled per default. Notably, embedded people using the *swan’s would enable it due to customer demand. It annoyed us so much that sometime around 2005 (I can’t find it in the changelog) we just ripped it out. Of course, it did not prevent people from patching it back in, which some did. Most likely, many unattended embedded devices out there are still doing modp768 bassed VPNs, and as LogJammer shows, that’s within reach of academia and banana republics – so count on these being decrypted routinely by the Five Eyes, Russia, China, and probably most other Western TLA’s.

The default IKEv1 modp group for openswan (and libreswan) was 1536. In July 2014, libreswan changed this to MODP2048. I believe openswan is still using modp1536 (and if you are still using openswan, I recommend that you switch ASAP, see this git commit activity comparison

IKEv2 MODP groups

IKEv2 has seen a very very slow start. I’ve only seen it really being deployed in the last two to three years. Most of these deployments use at least MODP2048, do not support MODP768, but are usually willing to do MODP1536 or even MODP1024. The latter is mostly done for fear of compatibility issues. But also to support an upgrade path for all devices. If your VPN connection supports “either IKEv1 or IKEv2” and your clients are still connecting with IKEv1, then most surely you will need to support MODP1536 and even MODP1024, or you will be losing your older VPN clients.

In April of 2008, as the then release manager of Openswan, we released version 2.6.12 which changed the IKEv2 default to MODP2048. This was a controversial decision – one developer quit the openswan project over this change because he deemed it would cause too many interoperability failures because many IKE implementations did not properly implement INVALID_KE renegotiation or simply did not support MODP2048. No problems emerged after the change, but most likely because simply no one but the certification checkbox people were even testing or running IKEv2 at the time. Since then, libreswan (but not openswan) has implemented proper INVALID_KE renegotiation.

RFC 5114 MODP groups

There is a semi-mysterious

The purpose of this document is to provide the parameters and test

data for eight additional groups, in a format consistent with existing RFCs.

It lists one new 1024 bit MODP group and two 2048 bit MODP groups, when we already had RFC 3526, which has MODP groups up to 8192 bit. The odd thing is that when I talked to people in the IPsec community, no one really knew why this document was started. Nothing triggered this document, no one really wanted these, but no one really objected to it either, so the document (originating from Defense contractor BBN) made it to RFC status. Due to this strange history, the RFC 5114 MODP groups are not included in any default proposal set and MUST be manually configured before libreswan will use these groups. We had to support these groups due to demands for RFC compliance and certifications.

IKE and IPsec are not that bad you know

Not that I want to go into a nahnahnahnahnahnah mode, but to those people that keep dissing IKE and IPsec, note that we were again not vulnerable unlike TLS. And when it comes down to cipher mode attacks, IKE has proven to be far more secure than TLS has been in the last three years. So please stop beating up on IKE/IPsec citing some old papers from the late 90’s. Kthanks

It’s easy to forward or like an article of which the title says something you agree with, without actually reading the content. It seems “DNSSEC has failed” is one of those that showed up in my twitter feed. It’s so defunct of facts, it’s pretty clear most people have just read the title and retweeted it. Hopefully, they will at least read this post before not retweeting it.

It starts comparing the DNSSEC protocol with the SSH protocol. It claims ssh just works. It fails to take into consideration that the two are completely different trust models. With ssh, you tend to own both end points. So there is no issue of PKI or key distribution or trust. And even so, I am willing to bet the author never actually checks his SSH fingerprints when connecting to new servers, and just depends on the ssh leap of faith. Well, security is easy when you can assume you are not under attack. Most ironically, the easiest and only cross-trust-domain SSH public key distribution system out there is the DNSSEC protected SSHFP record. The only other one I know of is GSSAPI and other hooks into centrally-managed systems – eg all systems under one administrative control. Trust is pretty easy when you centrally distribute trust anchors.

Let’s also not talk about the debian ssh disaster, which was a pretty spectacular failure compromising thousands of ssh servers. Let me conclude the ssh comparison with inviting the author to login to bofh.nohats.ca using ssh in a fully trusted matter. He will end up 1) calling me to verify the ssh host key, 2) trusting the SSHFP key in DNSSEC or 3) leap-of-faithing it and hoping he was not under attack.

Next, the author brings up the tremendously easy to use and success of TLS. Never mind that we still need to run SSL 2.x for WinXP compatibility, most servers run TLS 1.0 which is severely broken, we just had the TLS 1.2 resumption/renegotiation disaster, about 4 CA compromises in 2013, and browsers running to patchwork solutions like hardcoded certificate pinning and Certificate Transparency registries that uses “n of m” style “it is probably the right certificate” solutions. There are about 600 (sub)CA’s that can sign any TLS certificate for any domain on the planet, ranging from Russia, to Iran to the U.S – all parties that are known to coerce parties and with a heavy industrial espionage component.

If TLS was successful, port 80 would be dead. Port 80 is the proof that the TLS trust model has failed. It involves money and so these certifications are only available to corporations. While DV certificates are now basically free, they are also considered worthless (protection against passive attack only) EV certificates are now dropping in price – which will make them finally available for more people but unfortunately because of that also lose their value. And all of this security hinges on the (mostly hidden) CABforum and browser vendor decisions on which CAs to include, and which CAs not to include.

While on most proprietary OSes, these preloaded CAs are at least managed by the vendor, the situation in Linux is a disaster. OpenSSL doesn’t support blacklists, NSS does. GnuTLS I don’t even know. Where are these CAs and blacklists? Do you know applications using openssl for their TLS are using the same CA bundle as applications using NSS or GnuTLS? Only very recently has Fedora and RHEL moved to a system where this is guaranteed. Even worse, most python wrappers using TLS don’t even check anything of your TLS connection. Presenting the wrong certificate will just cause that software to continue. And then look at the latest Apple TLS bug where anyone could bypass all TLS checks completely. How the author can even point to TLS as a security success is a complete mystery to me. It’s the worst computer security disaster we ever created! Look at the amount of complete failure TLS deployments at SSL Pulse – 30% allows weak ciphers, 6% has a broken certificate chain, less then 30% supports TLS 1.2, 6% vulnerable to the renegotiation/resumption attack, 70% vulnerable to the BEAST attack, 13% vulnerable to the compression attack, 56% allow RC4 and 50% does not support PFS. And that according to the author is the “success” that DNSSEC should take a lesson from?

Having listed the “success” stories of ssh and tls, he than looks at DNSSEC. His first factual statement regarding DNSSEC is “Most resolving software supports DNSSEC, but none have enabled it by default“. He is wrong. I have been the package (co)maintainer of bind, unbound and nsd for Fedora and EPEL for many years. On Fedora and EPEL/RHEL the default install enables DNSSEC for all three, and has done so for many years – even before the root was signed with DNSSEC!

Next he claims “To enable DNSSEC we have a ‘tutorial’ by Olaf Kolkman which spans a whopping sixty-nine pages“. As I said, the author is wrong. Simply “yum install bind nsd unbound” and the software comes with dnssec enabled, using the root key as trust anchor.

He then points to a DNSSEC training that takes “multiple days” and claiming that shows the complexity. The same vendor lists a two day DHCP course, a two and a five day “plain DNS & bind” course, and a two and five day IPv6 course. Does that mean those technologies are all failures too because it takes so many days to teach a course about it? According to the author, DHCP would be a failure and no one can deploy it because it “requires” a two day training? It’s just simplistic cherry-picked FUD.

His next argument is “Furthermore, there are almost no simple instructions for enabling DNSSEC, especially none that use KSKs and ZSKs, and include instructions on how to do key rollovers.“.

First, let me ask you how you do an ssh host key rollover? How do you make that seamless so it won’t trigger anyone who ever connected before from seeing a changed key? Where do they go to confirm the changed key is installed by the administrator and not the result of a hacked server or connection? Does this mean ssh has failed too? Again, amusingly enough the only way to rollover ssh keys is piggybacking on SSHFP in DNSSEC or GSSAPI trust models. But I don’t see the author claiming ssh has failed because it cannot do rollovers on its own. More cherry-picking.

The reality is, ssh and DNSSEC can do fine without rollovers. Only TLS requires rollovers because the trust model depends on the fact that you regularly pay the trustees managing that whole disaster. But if you do want or need to do a rollover, with ssh you’re fucked, with TLS you have to pay and with DNSSEC you have a seamless transition method. Let me repeat that – only DNSSEC actually implements a free and seamless key rollover mechanism! So of course, the author just states it is too hard to use. But again does not compare it against TLS or ssh at all. Cherry-picking all the way down. And by the way, there are also good reasons why you do not need to worry about DNSSEC rollovers at all.

As I said before, DNSSEC is already “enabled”, so let’s interpret the author’s quest for “simple instructions to enable DNSSEC” to mean “how do I sign my zone?”. Let us assume he is running bind right now with his zone in /var/named/1sand0s.nl. The author now has to issue the following complicated sequence of commands:

$ dnssec-keygen -f KSK -a RSASHA256 -b 2048 -n ZONE 1sand0s.nl

Generating key pair........+++ ..+++

K1sand0s.nl.+008+01699

$ dnssec-keygen -a RSASHA256 -b 1024 -n ZONE 1sand0s.nl

Generating key pair........................................++++++ ..................++++++

K1sand0s.nl.+008+24363

$ cat K1sand0s.nl.*.key >> 1sand0s.nl

$ dnssec-signzone -o 1sand0s.nl -k K1sand0s.nl.+008+01699 1sand0s.nl K1sand0s.nl.+008+24363

Verifying the zone using the following algorithms: RSASHA256.

Zone fully signed:

Algorithm: RSASHA256: KSKs: 1 active, 0 stand-by, 0 revoked

ZSKs: 1 active, 0 stand-by, 0 revoked

1sand0s.nl.signed

Edit named.conf and load the file 1sand0s.nl.signed instead of 1sand0s.nl and you’re done. And whenever you change your zone, re-run that dnssec-signer command.

(NOTE: and as pointed out to me, if you don’t have zone edits every week, do run that signer command in a cron job weekly to refresh the DNSSEC signature records)

You can put this into a git hook and maintain the whole thing without ever realising there is a signing step in between. Hardly rocket science. I think we can argue whether or not this is harder or easier than creating a CSR for Apache, get it signed, and than installed (and too many people will forget to actually get the intermediate CAs installed in apache, and their deployment is actually even broken!)

The author’s issue with opendnssec is that it is “yet another daemon” using a “database backend”. I guess he is also not running postfix because that thing has more daemons than I can remember the names of. Opendnssec’s default “database backend” is sqlite. If you hate those, there is a lot of software you’ll going to have to purge from your servers. Sqlite is a perfect backend for small databases that are just too complex for simple text files. For those of us who don’t mind two extra daemons and some sqlite files, to sign your domain from scratch with opendnssec:

# yum install opendnssec

# systemctl enable opendnssec.service

# systemctl start opendnssec.service

# ods-ksmutil zone add –zone 1sand0s.nl –input /var/named/1sand0s.nl –output /var/named/1sand0s.nl.signed

# ods-signer sign 1sand0s.nl

Change named to load the .signed zone instead of the unsigned zone and done. Shockingly complicated! Unsuitable for “common engineers” according to the author.

To make these instructions complete, the last step is to send your DS record to your Registrar which involves using their custom webgui to send them the following data:

yum install ldns

dig dnskey 1sand0s.nl > 1sand0s.nl.dnskey

ldns-key2ds -n 1sand0s.nl.dnskey

And if you want to do all of this within the same bind daemon and without sqlite, you can use bind’s builtin inline-signing management features instead.

Conclusion

Saying the tools are there for ssh (which doesn’t have ANY rollover support) and tls (which over half the world has misconfigured regardless of rollovers) but not for dnssec, is really short-sighted and wrong. What the author has done is taken his prejudice, and written some cherry-picked text justifying it. The only credit I can give him, is that he did not have to rely on the FUD statements by djb.

(as sent to the cryptography mailing list)

In light of the NSA achievements, a few people asked about the FreeS/WAN IPsec OE efforts and whatever happened to it.

The short answer is, we failed and got distracted. The long answer follows below. At the end I will talk about the current plans that have lingered in the last two years to revive this initiative. Below I will use the word “we” a lot. Its meaning changes based on the context as various communities touched, merged, intersected and drifted apart.

NOTE: On September 28, 2013 there is be a memorial service in Ann Arbour for Hugh Daniel, manager of the old IPsec FreeS/WAN Project. Various crypto people will attend, including a bunch of us from freeswan. Hugh would have loved nothing better than his memorial service being used as a focal point to talk about “new OE”, so that’s what we will do on Saturday and Sunday. If you are interested in attending, feel free to contact me.

OE in a nutshell

For those not familiar with IPsec OE as per FreeS/WAN implementation. When activated, a host would install a blocking policy for 0.0.0.0/0. Every packet to an IP address would trigger the kernel to hold the packet and signal the IKE daemon to go find an IPsec policy for that destination. If found, the tunnel would be build, and an IPsec tunnel to the remote IP would be established, and packets would flow. If no policy was found, a “pass” hole was poked so packets would go out unencrypted. Public keys for IP addresses were looked up in the reverse DNS by the IKE daemon based on the destination address. To help with roaming clients (roadwarriors), initiators could store their public key in their FQDN, and convey their FQDN as ID when performing IKE so the remote peer could look up their public key in the forward DNS. This came at the price of two dynamic clients not being able to do OE to each other. (turns out they couldn’t anyway, because of NAT)

What were the reasons for failing to encrypt the internet with OE IPsec (in no particular order):

1) Fragmentation of IPsec kernel stacks

In part due to the early history of FreeS/WAN combined with the export restrictions at the time. Instead of spending more time on IKE and key management for large scale enduser IPsec, we ended up wasting a lot of time fixing the FreeS/WAN KLIPS IPsec stack module for each Linux release. Another IPsec stack, which we dubbed XFRM/NETKEY appeared around 2.6.9 and was backported to 2.4.x. It was terribly incomplete and severely broken. With KLIPS not being within the kernel tree, it was never taken into account. XFRM/NETKEY remained totally unsuitable for OE for a decade. XFRM/NETKEY now has almost all functionality needed – I found out today it shoudl finally have first+last packet caching for dynamic tunnels, which are essential for OE. Since the application’s first packet triggered the IKE mechanism, the application would start retransmitting before IKE was completed. Even when the tunnel finally came up, the application was usually still waiting on that TCP retransmit. David McCullough and I still spend a lot of time fixing up KLIPS to work with the current Linux kernel. Look at ipsec_kversion.h just to see what a nightmare it has been to support Linux 2.0 to 2.6 (libreswan removed support for anything lower then recent 2.4.x kernels)

Linux IPsec Crypto hardware acceleration in practise is only possible with KLIPS + OCF, as the mainstraim async crypto is lacking in hardware driver support. If you want to build OE into people’s router/modem/setup box, this is important, though admittingly less so as time has moved on and even embedded hardware and phones are multicore or have special crypto CPU instructions.

An effort to make the kernel the sole provider of crypto algorithms that everyone could use also failed, and the idea was abandoned when CPU crypto instructions appeared directly accessable from userland.

2) US citizens could not contribute code or patches to FreeS/WAN

This was John Gilmore’s policy to ensure the software remained free for US citizens. If no US citizen touched the code, it would be immune to any presidential National Security Letter. I believe this was actually the main reason for KLIPS not going in mainstream kernel, although personal egos of kernel people seemed to have played a role here as well. Freeswan people really tried had in 2000/2001 to hook KLIPS into the kernel just the way the kernel people wanted. (Ironically, the XFRM/NETKEY hook so bad, it even confuses tcpdump and with it every sysadmin trying to see whether or not their traffic is encrypted) I still don’t fully understand why it was never merged, as the code was GPL, and it should have just been merged in, even against John’s wishes. Someone would have stepped in as maintainer – after all the initial brunt of the work had been done and we had a functional IPsec stack.

In the summer of 2003, I talked to John and together we agreed it was time to fork. Openswan was born to clearly indicate US coders could contribute. However, at that point the (then crappy) FRM/NETKEY IPsec stack was there to prevent OE from working due to the missing first+last packet caching. The FreeS/WAN Project ended and Openswan continued. At first in good pace, but that later slowed down and OE was no longer its focal point. (Due to legal reasons, I cannot go into details regarding the openswan history)

3) Not using DNS without DNSSEC

There were various issues that caused DNSSEC to get massively delayed. We needed DNSSEC to secure our DNS based distributed public key platform. Although it would have worked fine to use DNS against passive attackers (NSA trawling), we believed it was principly wrong to trust cryptographic material that was untrusted and vulnerable against active attacks. So while the developers encouraged people to put keys in DNS even without security, no one else picked it up. It sucks to need to say ‘we told you so’. But we should have really not waited on DNSSEC.

4) Dealing with the DNS working groups at IETF

The DNS community is one of the most pedantic group of people I know. They are very smart, often right, and had been known to be extremely defense of their DNS turf. (Note that things have improved considerably and if you think this is still an issue, I’m happy to try and help)

IETF was divided about the convergence of the “security of the DNS” and the “DNS as PKI” despite that this had always been a goal of DNSSEC for a large group of people within the IETF. The FreeS/WAN people were driving DNSSEC not so much for DNS as for the key distribution. After all, you can detect DNS forging if you know your public keys.

When we had the KEY/SIG records ready to go, it was decreed that it could only be used for the DNS itself. Applications could not use this KEY record. To make that distinction more clear, on the next change in the draft protocol, KEY was obsoleted and DNSKEY introduced. So IPsec keys were relegated back to TXT, since at the time we had no Generic Record format (RFC 3597) support, so waiting for any new RRtype to get any deployment to become usuable would take years. Almost everyone was on bind4 and never upgraded left us with no other choice but the TXT. Even though we wrote the OE and IPSECKEY RFCs, OE’s only deployments were done using TXT records.

5) DNSSEC was delayed by a decade

DNSSEC deployment was slowly gaining traction, but I think we really needed the Kaminsky bug to get that extra push for DNSSEC outside the geeks of the IETF. The US government mandate for DNSSEC in .GOV helped as well. But by this time, OE was mostly forgotten.

djb repeatedly tried to peddle his own warez. While not at all realistic, it always gained a lot of hype and media attention and probably did cause delays of DNSSEC deployment.

Kaminsky himself was shooting down DNSSEC too. I personally heckled him at various Black Hat’s and ICANN conferences until we finally sat down for a couple of hours to talk about DNSSEC’s history and design goals. I’ll claim my 15 minutes of fame for having converted him. It helped having Kaminsky say that although he didn’t like the complexity, he couldn’t see anything better. DNSSEC was needed for everyone.

DNSSEC was gaining traction. Then we ran into a bunch of DNSSEC deployment issues. We had the delays due to NSEC vs NSEC3 with OPTIN, and then on top of that in 2008 when the first big ISP in Sweden turned on DNSSEC in their resolvers all that traction was blown away.

Most consumer routers ran DNS proxies that implemented DNS as “known bitstreams” instead of implemeting the actual DNS protocol. The DNSSEC OK bit caused thousands of routers to drop DNSSEC packets as “invalid DNS”. The only realistic solution: Turn it off and wait two years for those routers to get obsoleted by faster wifi standards and talk to those vendors so they would not repeat their mistake with their next generation of routers.

We now have the IPSECKEY record format (though RFC 4025 is not useful, see below) and RFC 3597 for the generic DNS record deployed on all DNS servers. And we’re on our way to have DNSSEC on every end node (see also draft-wouters-edns-tcp-chain-query-00 I just submitted to the IETF)

We have a mostly clean working UDP/TCP port 53 transport for DNSSEC on most networks (in part thanks to Google DNS). Although our hotspot handling is still a little rough, with dnssec-trigger the only tool to hack configurable DNSSEC support into the OS for our coffee shop visits when we need to rely on forged DNS.

6) When you’re NAT on the net, you’re NOT on the net.

Opportunistic Encryption relied on a clear peer to peer connection. But we managed to degrade the internet into servers and clients. NAT was the biggest problem, and with CGN around the corner, it’s not something that is going away despite IPv6 offering enough IPs for everyone. In fact, for our “new OE”, this is the biggest hurdle to overcome. When Alice cannot talk to Bob because she cannot reach him due to a (carrier grade) NAT, we are stuck wildly poking holes and hoping packets flow.

7) The reverse DNS tree is dead Jim

OE depended on the reverse tree as a security mechanism that someone who was claiming a public key for a specific IP range was actually the legitimate owner of that IP space. It was the security method for RFC-4025.

But unless you are running in a datacenter, you do not have access to the reverse DNS. It is useless as key distribtion method. On top of that, large IPv6 deployments don’t even care any more to run any authoritative DNS for their reverse.

8) BTNS

The IETF tried to revive this OE with the Better Then Nothing Security (“BTNS”) working group. Contrary to the name, they also fell into the “perfect is the enemy of good” trap and most discussion seemed to go into “channel binding” to upgrade anonymous IPsec to some kind of authenticated IPsec – at least by the time I became aware of them. In other words, the most important problem of key distribution was left outside the scope and no one actually seemed to have implemented anything. Though I have to admit, I’m behind on reading the VPN auto-discovery drafts. It is just

very discouraging to still be reading problem statement drafts. More over, I don’t think we should setup IPsec tunnels based on packets hitting the kernel. We have better ways now that we can leverage DNSSEC.

9) We were all complacent

The only interest for IPsec was for corporate VPNs. During the above listed problem periods, OE people gave up. Some walked away from IETF. While everyone gained an always-on portable IP device,

their crypto capabilities were practically non-existent. My current iphone 5 can connect to a corporate VPN, but trying to make it _just_ send out encrypted packets is impossible. Some trickery can be used to cause almost any packet to setup the VPN, but while that’s going on it is still leaking like a sieve. VPN is seen by phone vendors as a method to gain some enterprise users, not as the tool to protect the consumer. The Apple VPN client is a 10+ year old patched version of racoon. The only vendor that took VPNs seriously was RIM and we punished them by not buying their products, because we had other priorities like FourSquare, Facebook and Twitter.

We can only hope that those PRISM players are now put under economic pressure by frightened consumers to fix this. But as long as VPNs and DNSSEC is slow and error-prone, it is better for them not to go there.

The New Opportunistic Encryption

I’ve been brainstorming with various people on how to put IPsec OE back on the table. I’ve discussed this with a bunch of people around me, including the late Hugh Daniel, John Gilmore and Hugh Redelmeier of freeswan.

The packet capturing 0.0.0.0/0 policy is not a good method because we cannot make any decision on where to find a public key for an IP address. The reverse is unusable, and IP addresses change often. We used it because we had nothing better. But now we do. Since every (secure) platform now runs DNSSEC on the end node, we can use this as our decision making point. Imagine my phone running a DNSSEC resolver (say unbound) and an IKE daemon (say libreswan). The DNS server has access to the set of DNS name and matching IP address. It can lookup the key in the forward DNS zone, and hand over the public key, dns name and IP address to the IKE daemon!

1) User tells browser to go to www.cypherpunks.ca

2) browser does a lookup for the A/AAAA record of www.cypherpunks.ca

3) DNSSEC resolver performs the lookup/validation for the A/AAAA record of www.cypherpunks.ca and additionally looks up the IPSECKEY record of www.cypherpunks.ca.

4a) The resolver will wait with returning the A/AAAA record to the browser until it knows if the IPSECKEY record exists or not. If not, it releases the A/AAA answer to the application. Packets flow in the clear.

4b) The resolver finds an IPSECKEY record. It sends the pubic key, the FQDN and the IP address(es) to the IKE daemon and waits for a response. Meanwhile it does _not_ release the A/AAAA record to the application.

5) The IKE daemon sets up the IPsec tunnel. We haven’t reached agreement yet over how this should be done. There are two choices:

a) The client uses an “@anonymous” ID for itself along with sending its public key inline with IKE. The client is responsible for ensuring there is no MITM attack, as it knows the server’s public key (from DNSSEC). The responding server will just use any key it received inline if it was received for the “@anonymous” ID.

b) The initiator (aka client) uses its own FQDN-based ID. It has preconfigured its DNS so that an IPSECKEY record exists for its FQDN (protected by DNSSEC). The key is not send inline with IKE. Instead, when the responder (aka server) sees the non-anonymous ID, it will perform a DNSSEC secured lookup to obtain the IPSECKEY out of band. Both parties confirm there is no MITM.

The advantage of a) is that it leaks less user information and makes tracking users harder. The client can regularly generate another anonymous keypair. The disadvantage of a) is that it turns peers into clients and servers. And two clients cannot initiate OE to each other.

6) The tunnel is established and the IKE daemon notifies the local DNSSEC server that had instructed it to setup the IPsec tunnel.

7) The resolver releases the IP address to the application.

8) The applications starts sending packets and the IPsec policy encrypts them al.

I’m personally in favour of the @anonymous solution. But there is no reason why support for both could not be implemented.

What are some of the obstacles and work to do:

1) writing the unbound plugin

2) writing the support for @anonymous for the server-side. This includes raw keys for IKEv2 (draft-ietf-ipsecme-oob-pubkey)

3) With NAT, the client suggests an inner-IP. This could be abused or clash, We need to ‘contain’ each connection, possibly using generated ipv6 addresses 4) We cannot use the “gateway” field of RFC-4025, or people could trick a server into giving a client all communication to a certain IP address that does not belong to them

5) anonymous connections should generate throw-away keys to remain anonymous

6) implement draft-wouters-edns-tcp-chain or else latency/RTTs will prevent real-life deployment of DNSSEC validated IPSECKEYs on mobile devices.

7) This allows no upgrading from anonymous to mutually authenticated, but IKE policies can be added to the server/client that would match on different IDs (eg X.509) that work independantly of OE without introducing complicated channel binding promotion code. Other IKEv2 extensions could possible be applied to facilitate promotions.

I’m sure more implementation issues will show up once we get this going, but there are no real fundamental issues why we cannot deploy this in a couple of months of time. My plan is to get libreswan to support this version of OE. Additionally, once we use draft-wouters-edns-tcp-chain, it becomes cheap to do these lookups through the tor network. If the tor exit nodes then also feed each other with DNSSEC cache material, it should make tracing individual clients even harder.

(anyone willing to assist, especially with coding, do contact me)

Heml.is is a new instant message client promise that is “beautiful & secure” that seems to have cashed in on the current NSA scare, by lifting on the good (bad?) name of one of the Pirate Bay founders. Apparently, people have committed $150 USD for this in two days.

Clearly people believe hemlis will offer something that the 62 apps in the Google Play store, and over a 1000 apps in the Apple App Store don’t offer…..

What do they promise? Let’s first go through their claims in their video:

“no one can spy on you, not even us”

“only your friend can read what you write”

“based on end to end encryption”“We are building Heml.is on top of proven technologies, such as XMPP with PGP”

Great. So full trustworthy end to end encryption. Which means you can do this over ANY insecure transport mechanism. I could post those encrypted IMs on my blog – only the intended recipient would be able to decrypt it. Very secure. But it raises another question:

“We are building Heml.is on top of proven technologies, such as XMPP with PGP”

Great. So full trustworthy end to end encryption. Which means you can do this over ANY insecure transport mechanism. I could post those encrypted IMs on my blog – only the intended recipient would be able to decrypt it. Very secure. But it raises another question:

“build as secure and as fast as possible a service”

Why do we need the hemlis XMPP infrastructure? As far as I can determine, the only reason for that infrastructure is to give the hemlis people an excuse for annual revenue. Why not let Facebook and Google pay to maintain that XMPP infrastructure? While I might not trust Google as an entity, I sure as hell trust Adam Langley’s TLS protocol choices that he can steer Google into using. So, what’s the hemlis excuse for running their own service:

“We will however charge a small fee (via an in-app purchase) to unlock certain features. Exactly which features is to be determined at a later time. We do this to fund the continuing development and infrastructure.”

“The way to make the system secure is that we can control the infrastructure. Distributing to other servers makes it impossible to give any guarantees about the security. ”

As the Islandic Penn & Teller would say (after talking to their lawyer) is: kjaftæði!

Didn’t they just say end to end encryption was going to be used? Who cares about the security of the transport if the message is securely end-to-end encrypted! Didn’t the hemlis people just claim the NSA and GHCQ could vacuum up a copy of my PGP encrypted chat message and they wouldn’t be able to decrypt it? If the end-to-end encryption works, there is no need to require a secure (hemlis run) XMPP network service network.

Centralizing is the wrong thing to do. By running their own hemlis XMPP service, they are actually making it easier for the NSA and GCHQ to get access to our (encrypted) IM data. And additionally, they are making it trivial for governments to just mandate a block of the hemlis XMPP network service. I can guarantee you that China will never even be able to see this network provided it actually launches.

Much better would be to allow people to completely decentralize the whole IM business by letting them running their own XMPP servers. XMPP is made to allow full decentralization. You can find my XMPP identity simply by looking for it based on my email address, paul@nohats.ca. Your IM client will be able to find the XMPP servers of the (very very Small) IM network run by “nohats.ca” via the SRV DNS records published in DNS.

“usually, security means complexity”

Finally a quote I can agree with. However, I’m already cringing and waiting for the padlock to appear. Security is not a binary toggle, so yes, representing a security state to an enduser who is not an engineer is a hard problem. There is a reason security is complex and OTR is one of the few protocols and implementations that has a very strong focus on usability. The developers tried to keep it as simple as possible, but everything about security cannot be reduced to a fucking padlock!

Promising a simple beautiful secure GUI is like promising world peace. It’s not the idea that’s impossible – it’s the implementation of that idea that is impossible.

(If you wonder why I hate padlocks so much just search for “otr padlock” to see the number of people suggesting to use padlocks in the OTR gui. Or search how Moxie basically killed the browser padlock by using sslstrip with a fav.icon).

PGP Key exchange

If it is not to facilitate the very securely encrypted PGP messages, then the only other thing left to protect is the exchange and verification of PGP public keys. Of course for that we have PGP key servers – although the PGP key servers suck. Knowing where to get PGP keys is not enough. For example, the key for assange@wikileaks.tld is (most likely) not a real key used by Assange. Someone dropped a key there in the hope that someone would use it to encrypt something for Assange. (Interestingly, since the “tld” domain does not exist, one has to mail it to one of Assange’s real email addresses, and I only know of a few parties outside of Assange that are guaranteed to get a copy of such an email. But what if hemlis solved this already through superb engineering skills? Well, then they have another problem: they won’t accept untraceable anonymous accounts.

Why not use OTR? Well, according to hemlis:

“Even though we love OTR it’s not really feasible to use in a mobile environment. The problem is that OTR needs both parties to be online for a session to start, but a normal phone would not always be online. It would not work at all for offline messages neither.”

Kjaftæði!

Let’s ignore the fact that 99.99% of phones are always on and always connected to the network, making this statement completely bogus to begin with. Let’s say Aðalbjör wants to send Björn an OTR message. If Björn is offline, then Aðalbjör cannot initialise an OTR session to establish a secure channel over which they can talk securely. Aðalbjör will have to queue up her message to Björn until he becomes available. How is this different from Björn’s client receiving the securely transmitted message while Björn is asleep and not reading it? The only difference is where the message was queued up. Aðalbjör and Björn actually never need to both be online at the same time to start or continue to use OTR. As the hemlis people themselves admit on the FAQ, the XMPP protocol allows for storing messages to offline people, and those messages include the OTR handshake messages to establish a secure connection! Remember, OTR is an inline encryption

protocol. It works fine with XMPP offline messages.

Will it be open source?

“We have all intentions of opening up the source as much as possible for scrutiny and help! What we really want people to understand however, is that Open Source in itself does not guarantee any privacy or safety. It sure helps with transparency, but technology by itself is not enough. The fundamental benefits of Heml.is will be the app together with our infrastructure, which is what really makes the system interesting and secure.”

Mæli kjaftæði! This is where the snake oil really burns bright! While I have no doubt that

the opportunity for hemlis to make money is “interesting”, when you start saying that your application cannot be fully open source because it would be a security risk, you’ve committed the gravest mistake in cryptography – security by obscurity. Hemlis is going to be blackbox security. They should have called it Clipper Hemlis!

Why is Heml.is different from WhatsApp, MessageMe, iMessage etc?

“Our focus is your privacy so we are building everything from software to company structure to protect that.”

If the security of the hemlis IM network depends on the company structure of three guys standing up to the billion dollar a year military industrial complex combined with the billion dollars a year entertainment industry, you better have a plan B. I’m not comfortable with a distance of three waterboarding sessions, six kneecaps or one extremely expensive lawyer.

Not to mention that IM network that hemlis needs to run. They say it needs an annual revenue to keep running. Just ask wikileaks how easy it is to keep donations or payments going when Mastercard, VISA and PayPal ban you.

Finally, this last FAQ from their site is also very intriguing:

“How will the codes, pre-register usernames and “My name in the app” work? Prior to the release of Heml.is all backers will get an email with their codes and instructions on how to proceed. “

Unfortunately, they are not giving out any details about “hemlis IM network user identities”. Probably because they have no fucking clue how to deal with it. Can I register “NSAgov”? wikileaks@nohats.ca”? “EdSnoden”? helpdesk@heml.is?

They said they were using PGP, so I expect some kind of web of trust leverage with @heml.is identities or something. I have no idea how they would scale that so I can easily and securely find out what hemlis identity Assange has. The PGP keyservers are a disaster. It’s a battle field of spam, malicious fake identities, bogus signatures, keys where the private key was lost forever, and probably a bunch of compromised keys too. And despite all of that, it still failed to scale, provide a unified interface to easily obtain and verify keys. PGP keyservers should be taken down – they are a security risk at best. What is hemlis going to do different? Require a confirmation email? We’ve seen how well that works with the various Certificate Agencies.

Summary

While I’m happy to be proven wrong, the inevitable conclusion for now is that an hysteric mob of people gave three guys $150K for complete vapourware. I’m not sure who is more happy – those guys or the NSA. Next time you find yourself with a strong desire to sponsor the Alliance in the Crypto Wars, consider donating to those people who are actually working on these problems. Give some money to the IETF, or one of the crypto opensource projects. Just don’t buy more snákaolía please.

For those who wish to send me hate mail after reading this, please concentrate all your anger and hate and torpedo my OPENPGPKEY and OTRFP drafts that I submitted to the IETF instead. These two drafts are aimed at using DNSSEC to make Email and Instant Messages scale against passive (and some active) attackers and will assist and making encryption (and authentication) the default mode of the internet for our personal and private content.

What’s left for Hemlis? A imagined beautiful interface based on a few photoshop screenshots and a logo.

Snákaolía